#Python Requirements

At its core PySpark depends on Py4J, but some additional sub-packages have their own extra requirements for some features (including numpy, pandas, and pyarrow).
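To see which of the optional packages named above are available in the current environment, a small check like the following can help. This is a minimal sketch: the helper name `missing_packages` and the constant `OPTIONAL_PACKAGES` are made up for illustration and are not part of PySpark.

```python
import importlib.util

# Optional packages named in the requirements text above.
# The tuple and the helper below are hypothetical, not a PySpark API.
OPTIONAL_PACKAGES = ("numpy", "pandas", "pyarrow")


def missing_packages(names=OPTIONAL_PACKAGES):
    """Return the top-level package names that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Optional packages not installed:", ", ".join(missing))
    else:
        print("All optional packages are available.")
```

The check only looks up whether each package can be located; it does not import it or verify its version.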

#PySpark Anaconda Windows install

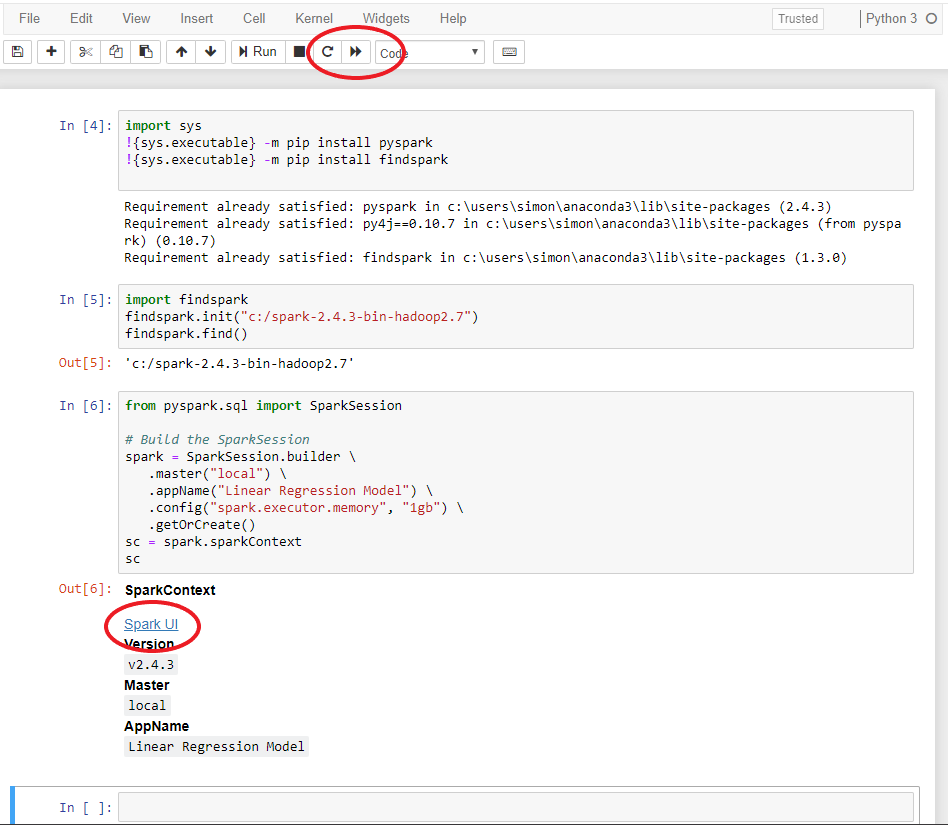

First, we need to install Anaconda using the graphical installer from its official site. One way to install findspark is `conda install -c conda-forge findspark`.

Select spark test and it will open the notebook. To run the test, click the "restart kernel and run all" button and confirm the dialogue box. This will install the pyspark and findspark modules (it may take a few minutes) and create a Spark Context for running cluster jobs. The Spark UI link will take you to the Spark management UI.

NOTE: If you are using this with a Spark standalone cluster, you must ensure that the version (including minor version) matches, or you may experience odd errors.

Matplotlib is an open-source Python library used to plot graphs.
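The version requirement in the note above can be checked before submitting work to a standalone cluster. The sketch below assumes that "matches" means the major and minor components are equal. The helper names `major_minor` and `versions_compatible` are hypothetical and not part of PySpark.

```python
def major_minor(version: str) -> tuple:
    """Extract (major, minor) from a version string such as '3.5.1'."""
    parts = version.split(".")
    return int(parts[0]), int(parts[1])


def versions_compatible(client_version: str, cluster_version: str) -> bool:
    """Assumption: versions 'match' when major and minor are identical."""
    return major_minor(client_version) == major_minor(cluster_version)


# Hypothetical usage: the client version is available as pyspark.__version__,
# and a running session reports the cluster-side version as spark.version.
# The literals below stand in for those values.
if __name__ == "__main__":
    print(versions_compatible("3.5.1", "3.5.0"))
    print(versions_compatible("3.5.1", "3.4.2"))
```

Comparing only major and minor is a design choice for this sketch; a stricter check could compare the full version string.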

#PySpark Anaconda Windows full version

This Python packaged version of Spark is suitable for interacting with an existing cluster (be it Spark standalone, YARN, or Mesos), but it does not contain the tools required to set up your own standalone Spark cluster. For that, you can download the full version of Spark from the Apache Spark downloads page.
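Connecting the pip-installed package to an existing standalone cluster could look roughly like this. It is a sketch under stated assumptions: the host name is a placeholder, the helper names are hypothetical, and port 7077 is the usual default for a standalone master, which your cluster may override.

```python
def standalone_master_url(host: str, port: int = 7077) -> str:
    """Build a spark:// master URL (7077 is the usual standalone default port)."""
    return f"spark://{host}:{port}"


def connect(master_url: str, app_name: str = "example-app"):
    """Create or reuse a SparkSession attached to an existing cluster."""
    # Imported lazily so the URL helper works even without pyspark installed.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .master(master_url)
        .appName(app_name)
        .getOrCreate()
    )


# Hypothetical usage; "spark-master.example.com" is a placeholder host:
# spark = connect(standalone_master_url("spark-master.example.com"))
# print(spark.version)
# spark.stop()
```

For YARN, the master is typically given as `"yarn"` instead of a `spark://` URL, with cluster details taken from the Hadoop configuration.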

The Python packaging for Spark is not intended to replace all of the other use cases. Using PySpark requires the Spark JARs; if you are building this from source, please see the builder instructions.

The Hadoop/Spark project template includes sample code to connect to the following resources, with and without Kerberos authentication: Spark, Hadoop Distributed File System (HDFS), and Hive. In the editor session there are two environments created. anaconda50hadoop contains the packages consistent with the Python 3.6 template plus additional packages.

After updating pip, install Jupyter Notebook with the command `python -m pip install jupyter`. Once the installation begins, the required packages are installed and the installation finishes. For an introduction to Anaconda itself, see "Getting Started with Anaconda Individual Edition".

Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for stream processing.

You can find the latest Spark documentation, including a programming guide, on the project web page.

#Python Packaging

This README file only contains basic information related to pip installed PySpark. This packaging is currently experimental and may change in future versions (although we will do our best to keep compatibility).